Overview

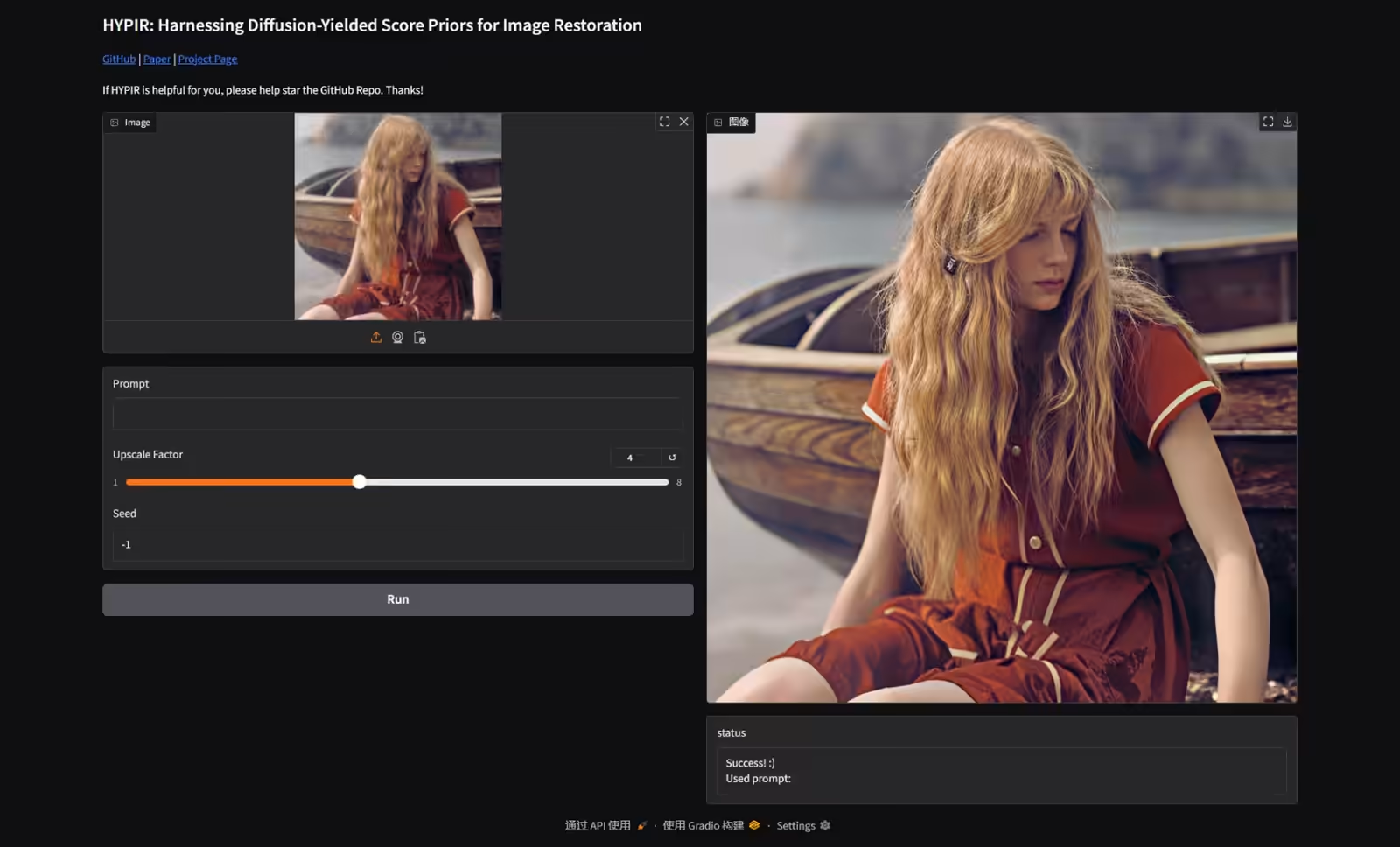

Image restoration aims to recover high-quality natural images from degraded ones, and it has advanced significantly with the progress of deep learning. Restoration methods face three key challenges: degradation removal, realistic detail generation, and pixel-level consistency. MSE-based, GAN-based, and diffusion model-based approaches emerged in sequence to address these, but each struggles to balance quality, fidelity, and speed. The paper proposes a new framework called HYPIR, which initializes the restoration network from a pre-trained diffusion model and fine-tunes it with adversarial training. By leveraging the score priors of diffusion models, HYPIR eliminates iterative sampling and restores an image in a single forward pass, making restoration both fast and high in quality. Theoretically, diffusion models learn the gradient of the log-density of noise-degraded images, so they provide an initialization that is already close to the natural image distribution, which improves training stability and convergence speed. Experimental results show better visual quality than state-of-the-art methods, along with user controls such as text-guided restoration and texture adjustment. The approach combines the strengths of diffusion models with the efficiency of GANs and presents a new, more practical paradigm for image restoration.

Historical Background

Image restoration was long addressed with traditional filtering and optimization-based methods, but the advent of deep learning brought major changes. Starting around 2014, convolutional neural networks trained with pixel-level loss functions became mainstream, effectively handling degradation removal and pixel consistency. They achieved excellent scores on quantitative metrics such as PSNR and SSIM, but their outputs were often overly smooth and lacked realistic detail. From 2017 onward, GAN-based methods addressed this by combining perceptual loss with adversarial training, producing images that look more natural to human viewers. However, GAN training is unstable, prone to mode collapse, and struggles to capture the full diversity of natural images. More recently, diffusion model-based methods have risen, using large-scale text-to-image models for high-quality restoration. These models learn score functions through gradual noise addition and removal, offering better mode coverage and more stable training, but their multi-step iterative process increases training time and slows inference. Against this background, HYPIR incorporates diffusion priors into a GAN framework, aiming for a balanced next-generation approach.

Problems with Existing Methods

MSE-based methods emphasize pixel-level accuracy but often produce overly smooth outputs that deviate from the natural image distribution. GAN-based approaches improve on this by generating realistic textures through adversarial training, but training instability leads to mode collapse, which limits the diversity of image detail they can express. Diffusion model-based methods achieve excellent generation quality using large pre-trained models, but they require 50-100 or more iterative denoising steps, taking 20 seconds for a 1080p image and 3 minutes for a 4K image. This restricts deployment in practical applications. Even diffusion distillation methods, which reduce the number of steps, face a trade-off between quality and efficiency. HYPIR addresses these problems by combining diffusion initialization with single-pass GAN inference. As a result, training converges quickly with low memory usage, which makes it feasible to integrate large diffusion models. The approach challenges two conventional assumptions: that diffusion models must always be paired with iterative samplers, and that GANs are inherently inferior to diffusion models.

HYPIR Approach

The core of HYPIR is to initialize the image restoration network from a pre-trained diffusion model and then apply lightweight adversarial fine-tuning. Specifically, it builds on the diffusion model's U-Net, which maps degraded images toward the natural image distribution. By discarding the diffusion process and switching to direct GAN training, it removes the need for iteration. This rests on the insight that diffusion models, having learned score fields across noise levels, can efficiently estimate the gradient of the log-density of degraded images. Training combines pixel and perceptual losses with a discriminator that evaluates the realism of the generated image. Because the network starts near the natural image distribution at initialization, it reaches high-quality restoration within thousands of iterations. It also supports text prompts for guidance and noise injection for texture adjustment, giving users flexible control. This simple pipeline works without auxiliary modules such as ControlNet, which keeps memory usage low.
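As a rough illustration of how a pixel term, a perceptual term, and an adversarial term can be combined into one generator objective, here is a minimal sketch in plain Python. The function names, the loss weights, the use of MSE for the perceptual stand-in, and the non-saturating adversarial form are all assumptions made for the example; the source does not specify the exact losses or weights HYPIR uses.

```python
import math

def pixel_loss(restored, target):
    """Mean squared error between two equally sized flat pixel lists."""
    return sum((r - t) ** 2 for r, t in zip(restored, target)) / len(restored)

def feature_loss(restored_feats, target_feats):
    """Stand-in for a perceptual loss: MSE between feature vectors that a
    fixed feature extractor would produce (the extractor is not shown)."""
    return pixel_loss(restored_feats, target_feats)

def adversarial_loss(disc_logit):
    """Non-saturating generator loss, softplus(-logit): small when the
    discriminator scores the restored image as realistic."""
    return math.log1p(math.exp(-disc_logit))

def generator_loss(restored, target, restored_feats, target_feats,
                   disc_logit, w_pix=1.0, w_perc=1.0, w_adv=0.1):
    """Weighted sum of the three terms; the weights are illustrative only."""
    return (w_pix * pixel_loss(restored, target)
            + w_perc * feature_loss(restored_feats, target_feats)
            + w_adv * adversarial_loss(disc_logit))

# Toy values: an exact restoration that the discriminator rates as real,
# versus a poor restoration that it rates as fake.
good = generator_loss([0.5, 0.2], [0.5, 0.2], [1.0], [1.0], disc_logit=4.0)
bad = generator_loss([0.9, 0.0], [0.5, 0.2], [0.0], [1.0], disc_logit=-4.0)
```

In a real training loop the pixel lists would be image tensors, the features would come from a pre-trained network, and `disc_logit` would be the discriminator's output for the restored image; the sketch only shows how the terms add up.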

Theoretical Foundation

HYPIR's effectiveness is supported mathematically. Image restoration is formulated as estimating the score (the gradient of the log-density of the degraded image), which points along the shortest path toward the natural image distribution. Diffusion models learn precisely this score function, so they provide a well-suited initialization. Theoretically, diffusion initialization keeps the adversarial gradients small and stable, which prevents mode collapse and accelerates convergence. A lemma bounds the initial gradients, showing that the fine-tuning phase mainly refines details. An approximation strategy for the observation likelihood avoids intractable terms by relying on the model's denoising capability. Together, these results support both quality and speed by fusing the generative prior of diffusion models with the efficiency of GANs.
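To make the link between the score and denoising concrete, the following toy sketch applies Tweedie's formula, a standard identity for Gaussian noise, to a one-dimensional Gaussian prior where everything has a closed form. It is background illustration only, not the paper's lemma or derivation; the function names and the numbers are chosen for the example.

```python
def gaussian_score(x, mu, var):
    """Score d/dx log N(x; mu, var) of a 1-D Gaussian density."""
    return -(x - mu) / var

def tweedie_denoise(x_noisy, sigma, mu, prior_var):
    """Posterior mean E[x0 | x_noisy] via Tweedie's formula.

    Clean data x0 ~ N(mu, prior_var) and x_noisy = x0 + sigma * noise,
    so the noisy marginal is N(mu, prior_var + sigma**2).
    """
    score = gaussian_score(x_noisy, mu, prior_var + sigma ** 2)
    return x_noisy + sigma ** 2 * score

def posterior_mean(x_noisy, sigma, mu, prior_var):
    """Closed-form Gaussian posterior mean, used as a cross-check."""
    return (prior_var * x_noisy + sigma ** 2 * mu) / (prior_var + sigma ** 2)

x_noisy, sigma, mu, prior_var = 3.0, 1.0, 0.0, 1.0
denoised = tweedie_denoise(x_noisy, sigma, mu, prior_var)
check = posterior_mean(x_noisy, sigma, mu, prior_var)
# Both values equal 1.5: one score-weighted step moves the noisy sample
# from 3.0 toward the prior mean at 0.0.
```

A network that has learned the score at many noise levels therefore already knows which direction leads from a degraded sample toward clean data, which is the intuition behind using it as an initialization.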

Experimental Results

Experiments used datasets such as DIV2K and RealPhoto60 to verify HYPIR's performance. Ablation studies compared training with and without diffusion initialization and showed that diffusion initialization significantly improves training stability and image quality. Comparisons across diffusion model sizes (SD2, SDXL, SD3.5, Flux) indicated that larger models yield better results, with Flux-based HYPIR achieving the best visual quality. In user studies, HYPIR (SD2) was rated highest in the lightweight comparison, and HYPIR outperformed SUPIR in the large-scale comparison. Inference for a 1024x1024 image took 1.7 seconds, far shorter than the 20-95 seconds of existing diffusion-based methods. Qualitative evaluations on real-world images showed better recovery of facial details and text, and restored historical photos reproduced natural tones and textures. These results support HYPIR as a practical solution that balances efficiency and quality.
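The reported timings can be turned into a speedup range with simple arithmetic. The short sketch below uses only the figures quoted above (1.7 seconds versus 20-95 seconds at 1024x1024); the helper name is made up for the example.

```python
def speedup(baseline_seconds, new_seconds):
    """How many times faster the new method is than the baseline."""
    return baseline_seconds / new_seconds

hypir_seconds = 1.7
low = speedup(20.0, hypir_seconds)   # against the fastest quoted baseline
high = speedup(95.0, hypir_seconds)  # against the slowest quoted baseline
print(f"{low:.1f}x to {high:.1f}x")  # prints "11.8x to 55.9x"
```

In other words, the quoted numbers correspond to roughly a 12x to 56x reduction in inference time, depending on which diffusion-based baseline is used for comparison.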

Comparison with Other Methods

HYPIR compares favorably with existing methods. The table below summarizes the key image restoration approaches and shows where HYPIR sits among them.

| Method | Main Technology | Features | Weaknesses |
|---|---|---|---|
| MSE-based | Pixel-level loss | High quantitative metrics (PSNR/SSIM), fast inference | Over-smoothing, lack of realism |
| GAN-based | Adversarial training, perceptual loss | Visually natural textures, user control | Training instability, mode collapse |
| Diffusion-based | Iterative denoising, score function | High-quality generation, good mode coverage | Inference delay, multi-step iteration |
| HYPIR | Diffusion initialization + GAN fine-tuning | Fast single-pass, high quality/stable training, controllability | Dependency on large diffusion models |

This comparison positions HYPIR as a hybrid approach that inherits the strengths of diffusion models while retaining the efficiency of GANs. Quantitative evaluations on synthetic data recorded a PSNR of 30.5 dB and an LPIPS of 0.15, among the best of the compared methods, and HYPIR also led on NIQE and MANIQA for real-world data.
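For readers less familiar with the PSNR metric, here is a minimal sketch of the standard definition, 10 * log10(MAX^2 / MSE), written in plain Python for flat lists of pixel values in [0, 1]. The sample pixel values are invented for illustration and are not taken from the experiments.

```python
import math

def psnr(reference, restored, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two equally sized pixel lists."""
    mse = sum((a - b) ** 2 for a, b in zip(reference, restored)) / len(reference)
    if mse == 0:
        return math.inf  # identical images have unbounded PSNR
    return 10.0 * math.log10(max_value ** 2 / mse)

# Illustrative pixels only: two of the four values are off by 0.1.
reference = [0.0, 0.5, 1.0, 0.25]
restored = [0.1, 0.5, 0.9, 0.25]
value = psnr(reference, restored)  # MSE is 0.005, so about 23.0 dB
```

By the same formula, a PSNR of 30.5 dB on images scaled to [0, 1] corresponds to a mean squared error of about 8.9e-4. Higher PSNR means lower pixel error, which is why MSE-trained models tend to score well on it even when their outputs look overly smooth.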

Future Prospects

HYPIR suggests new directions for image restoration. Integrating even larger diffusion models could enable support for 4K and higher resolutions and adaptation to more diverse degradation types. Enhanced text guidance may expand creative applications. For industry, the method's computational efficiency could accelerate deployment on mobile devices and in real-time processing.

Conclusion

HYPIR is an image restoration framework that leverages the score priors of diffusion models to overcome the limitations of existing methods. Its key insight is that diffusion initialization improves both the stability and the speed of GAN training. Experimental results confirm that it balances high-quality restoration with user control. The approach offers a more accessible and practical foundation for image restoration, and further applications remain to be explored as the field progresses.